Pigeon-Driven Development

AI is useful. Alignment is the job.

Scott Spence

CityJS London - April 2026


Pigeons?

publicdomainpictures.net

University of Iowa Study

- AI relies on the same kind of associative learning as pigeons

- Pattern matching, not reasoning

- That's not a criticism - it's incredibly useful


A very fast pattern matcher

Not a junior developer

A junior dev remembers stuff

Every session starts from zero

"Would you trust a pigeon?"

The AI Fix: Would you trust a pigeon? Women's T-shirt


Pigeon-Driven Development

- AI is an incredibly fast pattern matcher

- It can do amazing work but still miss the point

- Usefulness is not the same thing as understanding

- My job is not blind trust - it's keeping the model aligned

Scott Spence

AI and Product Engineer

- Svelte LDN co-founder

- Builds tools for AI to use

- Dad

- Cat dad

Not a doomer

Not a booster

A user

AI === Tool

The duality of AI usage meme

I build tools to work with AI

- If I can't see what Claude did, I can't supervise it

- ccrecall captures every Claude Code session into SQLite

- Every prompt, response, and tool call becomes queryable

- Which is how I can put receipts on the next slide


sessions

582,507 messages across three recall databases, Nov 13 2025 → Apr 16 2026

The recall trail

The industry moved in phases

- Early 2025: MCP, tools, files, databases, external context

- Then: agents started doing work, not just answering questions

- By 2026: orchestration - coordination, memory, supervision, handoff

- Different names, same core challenge: keep the model grounded in reality

My path followed that arc

- Jan 2025: I gave a talk on MCP servers

- 2025: I kept building MCP tools because I needed better research and context

- Late 2025: agentic coding became part of day-to-day work

- 2026: orchestration became the interesting problem — which is why I built Svortie

- What changed was the scale. What didn't change was the need for supervision

The terminology changed. The loop didn't.

- Give the model context

- Inspect what it produces

- Notice drift early

- Course-correct and realign

- Repeat until the work is actually right
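That loop is simple enough to sketch in TypeScript. This is a minimal illustration, not a real harness: `supervise`, `Generate` and `Check` are hypothetical names. The "model" is any function that takes feedback, and the check stands in for whatever inspection you actually trust (svelte-check, tests, reading the diff).

```typescript
// Hypothetical types: a draft is whatever the model produced this round,
// and a check returns a list of problems (empty means the work looks right).
type Generate<T> = (feedback: string[]) => T;
type Check<T> = (draft: T) => string[];

interface SuperviseResult<T> {
  draft: T;
  rounds: number;
  problems: string[]; // problems still open when the loop stopped
}

// Give context, inspect the output, feed the problems back in, and repeat
// until the check passes or the round budget runs out.
function supervise<T>(
  generate: Generate<T>,
  check: Check<T>,
  maxRounds = 5,
): SuperviseResult<T> {
  let draft = generate([]); // first round: no feedback yet
  let problems = check(draft); // inspect what it produced
  let rounds = 1;
  while (problems.length > 0 && rounds < maxRounds) {
    // Course-correct: this round's problems become the next round's context.
    draft = generate(problems);
    problems = check(draft);
    rounds++;
  }
  return { draft, rounds, problems };
}
```

The round cap is the important design choice: if the same problem survives every round, the loop hands it back to a human instead of spinning.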

The Big Refactor

XO monorepo — where AI stopped feeling like a toy

What I was doing

- Svelte 5 app still dragging around a Svelte 4 UI package

- 928 errors in total

- Workflow: run svelte-check, paste 50 errors, apply fixes, repeat: 33 iterations in one session

- These days, this sort of refactor is Tuesday
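The batching step in that workflow can be sketched as a small helper. This is a minimal sketch, assuming the svelte-check errors have already been collected one entry per error; `chunk` is a hypothetical helper, and in the session itself the pasting was done by hand. For 928 errors, batches of 50 give 19 batches, the last one holding 28.

```typescript
// Split a list of diagnostics into batches small enough to paste into one prompt.
function chunk<T>(items: readonly T[], size: number): T[][] {
  if (!Number.isInteger(size) || size <= 0) {
    throw new RangeError("size must be a positive integer");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    // slice clamps at the end, so the final batch may be shorter than size
    batches.push(items.slice(i, i + size));
  }
  return batches;
}
```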

First lesson

- AI can chew through huge amounts of engineering work

- It will also confidently solve the wrong nearby problem

- Passing tests is not the same thing as correctness

- Copying a local pattern is not architecture

- Judgement stays with the engineer

The real problem was never 'can it code?'

Coding wasn't the breakthrough — alignment, memory, and repeatability were

Daily use changed the question

- It could always pattern-match code

- The real question became: can I keep it aligned?

- Repeated use exposes the same failure modes over and over

- This is when the meta-tooling starts appearing

- Skills, search, sqlite, memory, hooks — all responses to repeated drift

Why the tools started appearing

- Skills: teach recurring project-specific patterns

- ccrecall / pirecall: recover memory outside the session

- sqlite + search: make reality easier to query than guess at

- hooks: force checks before action

- nopeek: keep secrets out of model context

- I didn't build these because they're cool

- I built them because the same mistakes kept happening
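A hook that forces a check before action can be as small as one decision function. This sketch assumes Claude Code's PreToolUse hook input carries `tool_name` and `tool_input` (with `command` for Bash and `file_path` for Read); verify that shape against the hooks docs. The two rules and the `decide` helper are hypothetical examples, not the author's actual hooks.

```typescript
// Assumed shape of the JSON a PreToolUse hook receives (only the fields used here).
interface HookInput {
  tool_name: string;
  tool_input: { command?: string; file_path?: string };
}

interface Decision {
  block: boolean;
  reason?: string;
}

// Hypothetical rules: .env files (including .env.local etc.) and rm -rf / rm -fr.
const SECRET_FILE = /(^|\/)\.env(\.[\w-]+)?$/;
const DESTRUCTIVE = /\brm\s+-(rf|fr)\b/;

function decide(input: HookInput): Decision {
  const { tool_name, tool_input } = input;
  if (
    tool_name === "Read" &&
    tool_input.file_path !== undefined &&
    SECRET_FILE.test(tool_input.file_path)
  ) {
    return { block: true, reason: "Do not read .env files; secrets stay out of context." };
  }
  if (
    tool_name === "Bash" &&
    tool_input.command !== undefined &&
    DESTRUCTIVE.test(tool_input.command)
  ) {
    return { block: true, reason: "rm -rf is not allowed from the agent; ask the human." };
  }
  return { block: false }; // anything else goes through unchanged
}
```

A wrapper script would parse the hook's stdin JSON into `HookInput`, call `decide`, and on a block write `reason` to stderr and exit with code 2, which Claude Code treats as a blocking error and shows to the model.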

The muscle starts forming

- After enough sessions, you stop just reviewing output

- You start recognising failure patterns on sight

- You can often predict the mistake from the symptom

- That instinct is the real compounding

- This is where evaluation becomes more important than generation

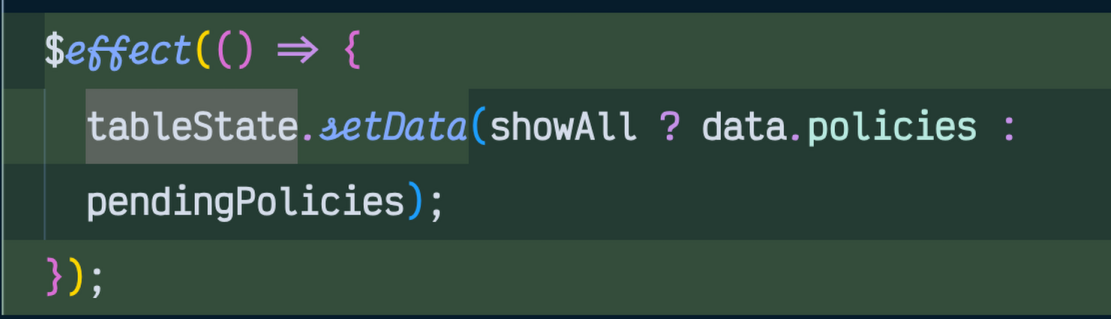

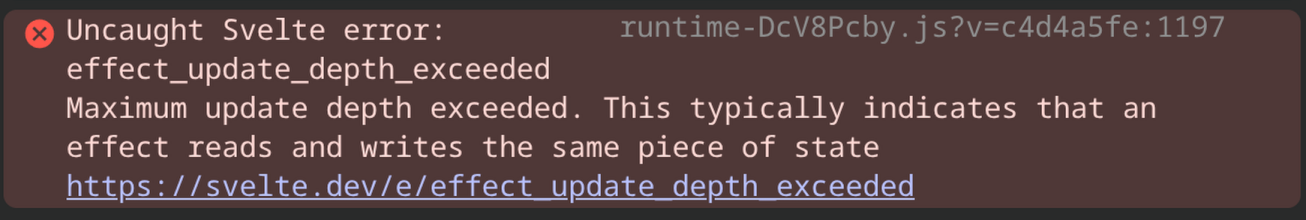

A recurring smell

There are skills warning against this. The model still reaches for it.

If the page freezes, I know where I'm looking

After enough repetitions, the symptom points to the likely mistake.

For anything remotely non-trivial, you need that muscle to really understand the outputs.

What that muscle actually looks like

- Page becomes unresponsive → probably $effect

- Repeated CSS/classes everywhere → extract a shared abstraction

- Huge near-duplicate blocks → stop copying, start factoring

- Test suddenly passes after code changed → check whether the target moved

- Confident answer with no source → probably guessed instead of researched

Svortie + Compounding

The workflow starts to click as a system

What compounding looks like

- Built between contracts in about two weeks

- 446 sessions and 200,390 messages in the personal DB

- Heavy use of skills, search, sqlite, agents, and browser tooling

- The model does the typing

- I steer, validate, and decide

Second lesson

- The model often looks busy before it is actually aligned

- Research beats guessing

- A concise diagnosis is worth more than another giant patch

- You start noticing drift earlier

- This is where vibe coding turns into vibe engineering

Production Work

Cloud Lobsters — real migrations, real deploys, real consequences

One refactor, three moving parts

Prisma → Raw SQL

Auth.js → Better Auth

Deploy cleanup

How the auth migration got lost

Not Just Claude

The harness changes, the workflow doesn't

One afternoon: from problem to tool

Common ways the model drifts

Vibe coding vs vibe engineering

Vibe coding

Vibe engineering

The supervision stack

- Skills for recurring project-specific patterns

- ccrecall and pirecall for memory outside the context window

- search, sqlite, and browser tools so the model can query reality

- hooks and redundancy for things that are actually mandatory

- nopeek to keep secrets out of model context

- The tools exist to make the next session better than the last one
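To make "keep secrets out of model context" concrete, here is one naive way to do it: mask values before dotenv-style text is ever shown to the model. This is not nopeek's implementation; `redactEnv` is a hypothetical helper, and a real tool would rather avoid loading the values into context at all.

```typescript
// Mask the values in dotenv-style text so key names survive but secrets don't.
function redactEnv(text: string, mask = "[redacted]"): string {
  return text
    .split("\n")
    .map((line) => {
      // Capture an optional "export", the key, and the "=" sign; drop the value.
      const match = /^(\s*(?:export\s+)?[A-Za-z_][A-Za-z0-9_]*\s*=).*$/.exec(line);
      return match ? `${match[1]}${mask}` : line; // comments and blank lines pass through
    })
    .join("\n");
}
```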

Smart Zone vs Dumb Zone

Smart Zone (~40%)

Dumb Zone (~60%)
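The slide gives only the two percentages. A minimal sketch, assuming they describe how much of the context window has been used: roughly the first 40% of tokens is the smart zone and everything after it is the dumb zone. The `contextZone` helper and the exact 0.4 cutoff are illustrative, not a measured threshold.

```typescript
type Zone = "smart" | "dumb";

// Assumed cutoff: the share of the context window after which quality tends to drop.
const SMART_ZONE_LIMIT = 0.4;

function contextZone(tokensUsed: number, contextWindow: number): Zone {
  if (contextWindow <= 0) {
    throw new RangeError("contextWindow must be positive");
  }
  return tokensUsed / contextWindow <= SMART_ZONE_LIMIT ? "smart" : "dumb";
}
```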

Takeaways

AI is a fantastic tool, but it is not self-driving

The job is not writing prompts — the job is maintaining alignment

Real leverage comes from supervision, memory, and guardrails

The failure modes stay weirdly consistent

The new developer skill is knowing when the AI is talking shit

My tools

ccrecall CLI

pirecall CLI

nopeek CLI

Claude Toolkit

Thank you

Would you trust a pigeon?

github.com/spences10

scottspence.com